Newline produces effective courses for aspiring lead developers

Explore a wide variety of content to fit your specific needs

article

NEW RELEASE

Free

AI Learned from 20 Projects

Watch: 20+ free AI agent project examples by Sabrina Ramonov 🍄

Learning from AI projects accelerates skill development and organizational growth by turning abstract concepts into actionable insights. When professionals engage with hands-on projects, they not only deepen their technical…

article

NEW RELEASE

Free

Why Most AI-Built Products Fail

Watch: Why AI Fails When Product Strategy Is Broken? by TechDailyAI

Understanding why AI-built products fail is critical for businesses and developers aiming to avoid costly mistakes. Industry data reveals staggering failure rates: 90% of startups fail because they build products no one wants, and…

article

NEW RELEASE

Free

Why Low‑Resource NLP Still Struggles with Annotation

Low-resource NLP struggles with annotation because the vast majority of languages lack sufficient labeled datasets, which are critical for training accurate models. Over 2,144 languages exist in Africa alone, but only 64 are included in major NLP benchmarks. As mentioned in the Scarcity of…

article

NEW RELEASE

Free

Why LLM Summaries Fail Without Identification

Identification is the linchpin that determines whether LLM summaries deliver reliable insights or propagate errors. Without a structured process to identify and validate facts, summaries risk hallucinations: fabricated details that distort meaning and erode trust. As mentioned in the Understanding…

article

NEW RELEASE

Free

Why 80% of US AI Startups Switched to Chinese Models

Watch: Chinese AI startups see progress amid U.S. AI trade concerns by CNBC Television

The shift of 80% of U.S. AI startups to Chinese models reshapes the AI market, driven by cost efficiency, performance, and strategic advantages. Chinese open-source models like Alibaba’s Qwen and DeepSeek’s R1…

course

Bootcamp

AI bootcamp 2

This advanced AI Bootcamp teaches you to design, debug, and optimize full-stack AI systems that adapt over time. You will master byte-level models, advanced decoding, and RAG architectures that integrate text, images, tables, and structured data. You will learn multi-vector indexing, late interaction, and reinforcement learning techniques like DPO, PPO, and verifier-guided feedback. Through 50+ hands-on labs using Hugging Face, DSPy, LangChain, and OpenPipe, you will graduate able to architect, deploy, and evolve enterprise-grade AI pipelines with precision and scalability.

course

Pro

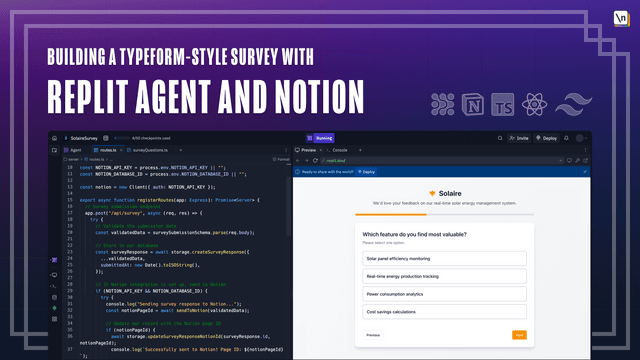

Building a Typeform-Style Survey with Replit Agent and Notion

Learn how to build beautiful, fully functional web applications with Replit Agent, an advanced AI coding agent. This course guides you through the workflow of using Replit Agent to build a Typeform-style survey application with React and TypeScript. You will learn effective prompting techniques, explore and debug code generated by Replit Agent, and create a custom Notion integration that forwards survey responses to a Notion database.

course

Pro

30-Minute Fullstack Masterplan

Create a masterplan that contains all the information you'll need to start building a beautiful and professional application for yourself or your clients. In just 30 minutes, you'll know which features you'll need, which screens to build, how to navigate between them, and even what your database tables should look like.

course

Pro

Lightspeed Deployments

Continuation of 'Overnight Fullstack Applications' and 'How To Connect, Code & Debug Supabase With Bolt'. This workshop recording shows you how to take an app and deploy it on the web in 3 different ways. All 3 deployments take only 30 minutes (10 minutes each), so you can focus on what matters: the actual app.

book

Pro

Fullstack React with TypeScript

Learn Pro Patterns for Hooks, Testing, Redux, SSR, and GraphQL

book

Pro

Security from Zero

Practical Security for Busy People

book

Pro

JavaScript Algorithms

Learn Data Structures and Algorithms in JavaScript

book

Pro

How to Become a Web Developer: A Field Guide

A Field Guide to Your New Career

book

Pro

Fullstack D3 and Data Visualization

The Complete Guide to Developing Data Visualizations with D3

EXPLORE RECENT TITLES BY NEWLINE

Expand your skills with in-depth, modern web development training

Stop living in tutorial hell

Binge-watching hundreds of clickbait-y tutorials on YouTube. Reading hundreds of low-effort blog posts. You're learning a lot, but you're also struggling to apply what you've learned to your work and projects. Worst of all, uncertainty looms over the next phase of your career.

How do I climb the engineering career ladder?

How do I continue moving toward technical excellence?

How do I move from entry-level developer to senior/lead developer?

Learn from senior engineers who've been in your position before.

Taught by senior engineers at companies like Google and Apple, newline courses are hyper-focused, project-based tutorials that teach students how to build production-grade, real-world applications with industry best practices!

newline courses cover popular libraries and frameworks like React, Vue, Angular, D3.js and more!

With more than 500 hours of video content across all newline courses, and new courses released every month, you will always find yourself mastering a new library, framework, or tool.

At the low cost of $40 per month, the newline Pro subscription gives you unlimited access to all newline courses and books, including early access to all future content. Go from zero to hero today! 🚀

Level up with the newline pro subscription

Ready to take your career to the next stage?

newline pro subscription

- Unlimited access to 60+ newline Books, Guides and Courses

- Interactive, Live Project Demos for every newline Book, Guide and Course

- Complete Project Source Code for every newline Book, Guide and Course

- 20% Discount on every newline Masterclass Course

- Discord Community Access

- Full Transcripts with Code Snippets

Explore newline courses

Explore our courses and find the one that fits your needs. We offer a wide range of courses, from beginner to advanced level.

Explore newline books

Explore our books and find the one that fits your needs.

Newline fits learning into any schedule

Your time is precious. Regardless of how busy your schedule is, newline authors produce high-quality content across multiple mediums to make learning a regular part of your life.

Have a long commute or trip without any reliable internet connection options?

Download any of our 15+ books, available in PDF, EPUB, and MOBI formats for reading on any device.

Have time to sit down at your desk with a cup of tea?

Watch more than 500 hours of video content across all newline courses.

Only have 30 minutes over a lunch break?

Explore 1-minute shorts and dive into 3-5 minute videos, each focusing on individual concepts for a compact learning experience.

In fact, you can customize your learning experience as you see fit in the newline student dashboard:

Building a Beeswarm Chart with Svelte and D3

Connor Rothschild

Hovering over elements behind a tooltip

Connor explains how setting the CSS property pointer-events to none allows users to hover over elements behind a tooltip in SVG data visualizations.

newline content is produced with the help of editors

Providing practical programming insights & succinctly edited videos

All aimed at delivering a seamless learning experience

Find out why 100,000+ developers love newline

See what students have to say about newline books and courses

José Pablo Ortiz Lack

Full Stack Software Engineer at Pack & Pack

I got a job offer, thanks in a big part to your teaching. They sent a test as part of the interview process, and this was a huge help to implement my own Node server.

This has been a really good investment!

Meet the newline authors

newline authors possess a wealth of industry knowledge and an infinite passion for sharing it with others. They explain complex concepts with practical, real-world examples to help students understand how to apply these concepts in their work and projects.

LOOKING TO TURN YOUR EXPERTISE INTO EDUCATIONAL CONTENT?

At newline, we're always eager to collaborate with driven individuals like you, whether you come with years of industry experience or you've been sharing your tech passion through YouTube, CodePens, or Medium articles.

We're here not just to host your course, but to foster your growth as a recognized and respected published instructor in the community. We'll help you articulate your thoughts clearly, provide valuable content feedback and suggestions, all towards publishing a course students will value.

At newline, you can focus on what matters most: sharing your expertise. We'll handle emails, marketing, and customer support for your course, so you can concentrate on creating amazing content.

newline offers various platforms to engage with a diverse global audience, amplifying your voice and name in the community.

From outlining your first lesson to launching the complete course, we're with you every step of the way, guiding you through the course production process.

In just a few months, you could not only jumpstart numerous careers and generate a consistent passive income with your course, but also solidify your reputation as a respected instructor within the community.